Smartphones & Co.

Best Dual Sim Phones – Everything You Need To Know

When dual SIM phones were first produced in the mid-2000s, most people considered them substandard devices. However, all of this would change a […]

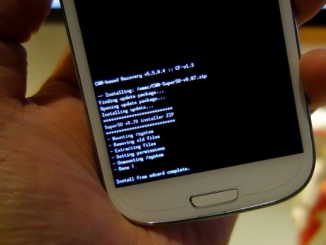

Android Recovery Stick: What Is It And How Does It Work?

Need to recover deleted data from your Android device? Use an Android Recovery Stick. It will find all the data that's on your Android […]

Extendable selfie stick – All you need to know

Hi guys, today we're going to talk about a simple but useful tech gadget: the extendable selfie stick. This gadget is perfect for you, […]

Samsung Galaxy Z Flip – a new concept

The Samsung Galaxy Z Flip is the newest member of the Samsung Galaxy lineup. It represents a major innovation in smartphone design. As you can see by a […]

Best automatic washing machines

Since their invention in 1930, automatic washing machines have become an increasingly essential household appliance. Of course, you would've […]